Automated Vehicles (AVs) may appear lonesome. They rely on their onboard perception systems alone to interpret their surroundings, seeing the world only through cameras, lidars, and radars as they find their way through everyday traffic.

But what if AVs had someone to talk to? What if AVs could benefit from information that others share with them? It’s happening!

Via vehicle-to-everything (V2X) communication, AVs can share information about themselves and their perceived surroundings. Others that receive this information become aware of the environment beyond their own onboard Field of View (FOV). It is much like how we talk to each other and describe the world we live in. From a technical perspective, we call the sharing of such information Collective Perception and the integration of such information Cooperative Awareness.

Collective Perception and Cooperative Awareness go hand in hand to extend the FOV of an automated vehicle. Within the SELFY project, the Situational Awareness and Collective Perception (SACP) team works on methods and tools to integrate and improve this functionality.

At SELFY, we tackle the aggregation and fusion of onboard data to build a single, consistent perception from multiple sensors, and we handle the relaying of information between vehicles and infrastructure.

You think this sounds like KITT suddenly has friends to talk to? That it is all sci-fi and only works on paper? Let’s see.

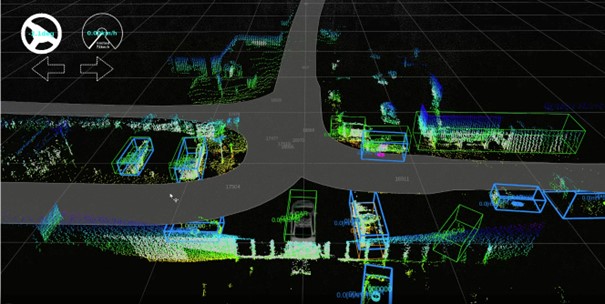

Take a look at the video of our live demonstration of the Data Aggregation and Fusion Tool. The colored dots are the lidar output of the ego vehicle, which is faintly visible in gray. The darker gray areas show the intersection as the ego vehicle knows it from its map.

Our ego vehicle perceives its surroundings, and AI detection algorithms detect other vehicles and pedestrians. Vehicles and Vulnerable Road Users (VRUs) detected by the ego vehicle have a blue bounding box. The other vehicle, our communicating friend, is standing right beside us and sends out its own position, which is highlighted as an orange bounding box. Our friend also tells us something about the environment it perceives. These objects are shown as green bounding boxes.

View of on-board object detection (blue) and perceived objects via V2X (green/orange)

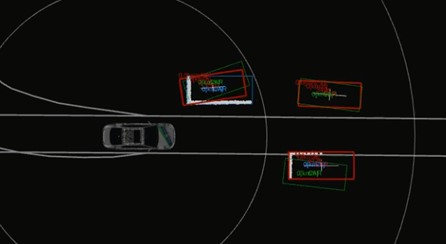

Once our communication partners, the other AVs, receive this information, they must integrate it. We show this step by fusing the perception information received via V2X (green) with our local information, the onboard perception (blue) shown previously. The fused result is drawn in red.

Fusion of on-board perception and the received Collective Perception Message (CPM)
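To make the fusion step a little more concrete, here is a minimal Python sketch of one common way to combine two object lists. It is not the SELFY Data Aggregation and Fusion Tool: the `Detection` class, the `fuse` function, the 2 m association gate, and the isotropic position variance are all assumptions made for illustration. Each local detection is matched to the nearest received object, and matched pairs are merged by inverse-variance weighting, so the more certain source counts more.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class Detection:
    x: float     # position in metres, in a shared map frame (assumed)
    y: float
    var: float   # isotropic position variance in m^2 (assumed)
    source: str  # "local" (blue), "v2x" (green) or "fused" (red)


def fuse_pair(a: Detection, b: Detection) -> Detection:
    """Merge two detections of the same object by inverse-variance weighting."""
    w_a, w_b = 1.0 / a.var, 1.0 / b.var
    total = w_a + w_b
    return Detection(
        x=(w_a * a.x + w_b * b.x) / total,
        y=(w_a * a.y + w_b * b.y) / total,
        var=1.0 / total,
        source="fused",
    )


def fuse(local, received, gate_m=2.0):
    """Greedy nearest-neighbour association followed by pairwise fusion.

    Objects without a partner inside the gate are kept unchanged, which is
    how received objects extend the ego vehicle's field of view.
    """
    unmatched = list(received)
    result = []
    for loc in local:
        best = min(
            unmatched,
            key=lambda r: hypot(r.x - loc.x, r.y - loc.y),
            default=None,
        )
        if best is not None and hypot(best.x - loc.x, best.y - loc.y) <= gate_m:
            unmatched.remove(best)
            result.append(fuse_pair(loc, best))
        else:
            result.append(loc)
    return result + unmatched


# Hypothetical example: one object seen by both, one seen only via V2X.
local = [Detection(10.0, 5.0, 1.0, "local")]
received = [Detection(11.0, 5.0, 1.0, "v2x"), Detection(40.0, -3.0, 1.0, "v2x")]
fused = fuse(local, received)
```

In this example the object seen by both vehicles ends up halfway between the two reports with half the variance, while the far-away object known only from V2X is passed through untouched. A real system would additionally have to handle time alignment, coordinate transformations, and object tracking, which this sketch leaves out.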

Did you know that traffic sensors and other infrastructure can talk too?

Intelligent intersections and other infrastructure are doing similar things. In this case, we call it traffic monitoring. Camera data is processed by our Traffic Monitoring Tool, while the lidar and optional radar data are processed by the Sensor Fusion & Anomaly Detection Tool. The latter tool is then responsible for fusing all sensor data and enriching the environmental perception. The detected objects are then sent out via V2X communication.

View of the traffic monitoring tool, tracking objects based on video data.

So, everyone talks to each other... What about trustworthiness and security?

With all these chatting partners learning more about their surroundings, how can we be sure that nobody is mistakenly sending incorrect information? Or, worse, even lying to us?

Our Threat Evaluation Tool, for example, compares received V2X messages with local sensor data and locally detected objects to detect potential anomalies such as received ghost objects or defective sensors. Our Situational Assessment Tool uses AI methods to compute the risk level of the current situation by considering environmental perception data as well as diagnostics data from the vehicle and the environment. If you are interested in that, our colleagues from security will talk about this in future blog posts, so don’t miss them! 😉
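As a small teaser, the idea of comparing received objects with local detections can be sketched in a few lines of Python. This is not the Threat Evaluation Tool itself: the function name `flag_ghost_candidates`, the circular 30 m sensor range, and the 2 m matching gate are assumptions chosen for illustration. A received object is flagged when it claims to be somewhere our own sensors should cover, yet nothing was detected there locally.

```python
from math import hypot


def flag_ghost_candidates(received, local, ego_xy, sensor_range_m=30.0, gate_m=2.0):
    """Return received (x, y) positions that lie inside the assumed sensor
    range of the ego vehicle but have no local detection nearby.

    Simplification: the field of view is a circle around the ego vehicle
    and occlusion is ignored, so a flagged object is only a candidate.
    """
    suspects = []
    for rx, ry in received:
        in_range = hypot(rx - ego_xy[0], ry - ego_xy[1]) <= sensor_range_m
        confirmed = any(hypot(rx - lx, ry - ly) <= gate_m for lx, ly in local)
        if in_range and not confirmed:
            suspects.append((rx, ry))
    return suspects


# Hypothetical example: ego at the origin with one locally detected object.
local_objects = [(10.0, 0.0)]
received_objects = [(10.5, 0.2), (15.0, 3.0), (80.0, 0.0)]
suspects = flag_ghost_candidates(received_objects, local_objects, ego_xy=(0.0, 0.0))
```

Here the first received object is confirmed by a local detection, the third is too far away for our sensors to judge, and only the second is flagged as a possible ghost object. A missing local match can also mean that our own sensor is occluded or defective, which is why such a check can only raise suspicion rather than prove an attack.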

Author: Virtual Vehicle Research